Today, NVIDIA, a major player in metaverse technology, announced several significant updates to its products. The announcement was made at SIGGRAPH, one of the largest global conferences for the computer graphics and animation industries. At the conference, NVIDIA announced three initiatives: the introduction of neural graphics powered by AI, further investment in Universal Scene Description (USD) for virtual world-building, and the launch of the Avatar Cloud Engine (ACE), which aims to make avatar production faster and easier.

Introducing Neural Graphics

Not everyone building a virtual world in the metaverse has the coding experience to do so. This can make the process longer and more challenging, and it limits who can participate in this space. To address this, NVIDIA is releasing a series of plugins for its Omniverse platform that use neural graphics. Neural graphics combines AI, in the form of neural networks, with conventional computer graphics software. The result is a more streamlined workflow that creators without programming expertise can use. One example is NVIDIA's Omniverse Audio2Face application, which uses AI to generate facial animations for an avatar directly from an audio file. This saves animators and creators valuable time and money in developing their work and, in turn, makes the metaverse accessible to more people.

A Long-Term Investment in USD

Because much of the metaverse's virtual infrastructure is built from individual scenes, NVIDIA has been investing in Universal Scene Description (USD) to make world-building easier. According to Rev Lebaredian, Vice President of Omniverse and Simulation Technology at NVIDIA: "Beyond media and entertainment, USD will give artists, designers, developers, and others the ability to work collaboratively across diverse workflows and applications as they build virtual worlds. Working with our community of partners, we're investing in USD so that it can serve as the foundation for architecture, manufacturing, robotics, engineering, and many more domains."

NVIDIA describes its expanded investment in USD as part of a long-term strategy. Part of that investment is releasing thousands of USD assets for free, making USD accessible to people with no coding experience. These assets can be especially helpful for animators. NVIDIA has also been collaborating with Pixar, which developed USD and released it as open source in 2016. "USD is a cornerstone of Pixar's pipeline, and it's seeing rapidly growing momentum as an open-source framework across not only VFX animation but now industrial, design and scientific applications," explained Steve May, Chief Technology Officer at Pixar Animation Studios. "NVIDIA's contributions to help evolve USD as the open foundation of fully interoperable 3D platforms will be a great benefit across industries."

The Avatar Cloud Engine (ACE)

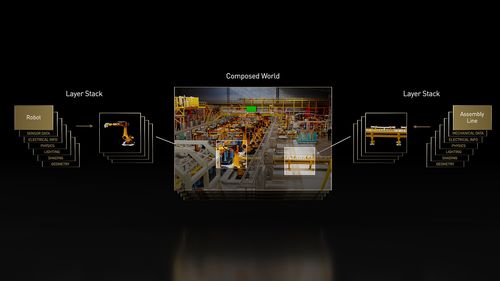

NVIDIA is also releasing the Avatar Cloud Engine (ACE), an AI-based software tool that will make creating avatars and virtual assistants easier and more efficient. The technology is designed to scale across businesses, with particular relevance to digital twin applications. Digital twins are virtual representations of places or objects used in simulations, and many companies use them to improve the efficiency of their supply chains. According to Kevin Krewell, principal analyst at TIRAS Research: "Demand for digital humans and virtual assistants continues to grow exponentially across industries, but creating and scaling them is getting incredibly complex. NVIDIA's Omniverse Avatar Cloud Engine brings together all of the AI cloud-based microservices needed to more easily create and deliver lifelike interactive avatars at scale."

These three updates to NVIDIA's services will not only make building in the metaverse easier but also help bring more people into this virtual space.